Microsoft's Massive Azure Extension into the Enterprise

Now that Windows Server 2016 is available, Microsoft says IT pros and developers can build and extend containerized workloads and applications to its network- and compute-enhanced Azure public cloud -- and vice versa.

Since the launch of Microsoft Azure in early 2010, Microsoft has consistently emphasized its goal of bringing parity between the datacenter and cloud, a prospect easier said than done, especially because infrastructure services didn't arrive until many years later. From the beginning, Microsoft underscored hybrid clouds as the way to get there, starting with Windows Azure Connect, a tool allowing connectivity from Microsoft-based endpoints to its public cloud. The company took a further step with the release of Windows Server 2012, which it dubbed Cloud OS for its ability to bridge datacenter workloads to Azure. While Microsoft would like to see organizations move the vast majority of their workloads to Azure over time, the company determined the server OS had to go beyond merely allowing customers to move existing applications and data to Azure. The next server OS needed a runtime environment, multitenant architecture attributes, security, and the ability to allow virtual machines (VMs) and applications to run on-premises and in Azure with the same properties, which became the core focus of Windows Server 2016.

After an extensive two-year preview cycle consisting of five beta releases, Microsoft has finally delivered Windows Server 2016, a much more extensible version of the OS for hybrid cloud environments, bringing it closer to the vision outlined in 2010. Not to be confused with Azure Stack, which is anticipated to launch next year, Windows Server 2016 offers the best path for those looking to transition existing and new workload and application stacks to Azure, or to share them with it.

"We think of Windows Server 2016 in many ways as the edge of our Azure cloud, and one of the things that we recommend you think of is Azure as the edge of all your on-premises servers," said Scott Guthrie, executive VP of Microsoft's Cloud and Enterprise group, announcing the official release of the OS during the opening keynote session at the Ignite conference in Atlanta. "Windows Server 2016 is a major enhancement of Windows Server. It's cloud-ready and incorporates a lot of the deep learnings that we've had running our own cloud with Azure, including the core capabilities that you need to run a software-defined datacenter."

Many of the new features in Windows Server 2016 have been outlined in first-look articles in Redmond magazine over the release cycles of the technical previews. They include support for software-defined network (SDN) infrastructure, more resilient Storage Spaces, support for Windows Server and Hyper-V containers, multitenant security via the Shielded Hyper-V VM feature, and the Nano Server headless deployment configuration option, which provides a much smaller server OS footprint and lets you source packages from repositories on either a local path or the cloud.

Microsoft and others believe that, over time, the Nano Server option -- and similar lightweight configurations such as Server Core -- could be the server deployment method of the future. According to Microsoft, the Nano Server option offers higher density and more efficient use of OS resources. This is important when configuring private cloud infrastructure, notably clustered Hyper-V, storage and core networking, or for deployment as an application platform for running cloud-scale apps based on containers and micro-services architectures. Despite its potential, the Nano Server configuration option is of limited use for now: most commercial applications can't currently run on it because it lacks some critical APIs, as explained by Redmond contributor Brien Posey, though he noted there are some tools that IT pros can use as a shim for some applications.

Azure-Based Windows Server Management

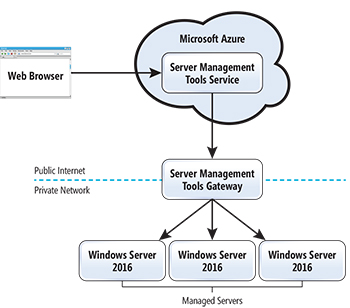

Most noteworthy -- and a key reason Guthrie describes Windows Server as the edge of Azure and vice versa -- is the new Windows Server 2016 Azure-based Remote Server Management Tools (RSMT) option, which is intended to be used in tandem with the Nano Server deployment option. While it wasn't clear in our June first look at the RSMT preview whether RSMT would be available upon the release of Windows Server 2016, Microsoft has confirmed that key components of the tools can be found in the Azure Marketplace. RSMT lets administrators manage any Windows Server 2016 servers running on-premises, as well as VMs in Azure. As Server Core and Nano Server options become more prevalent -- which Microsoft and others predict will be the norm over time -- traditional tools such as Server Manager or Microsoft Management Console (MMC) snap-ins won't work with the headless servers. RSMT uses a gateway architecture to allow for remote management of Windows Server 2016 machines and VMs on-premises. When creating a connection to the machines, administrators also configure and create a connection to the gateway machine (see Figure 1).

Figure 1. The Remote Server Management Tools (RSMT) and Microsoft Azure gateway architecture.

Among the management features now available with RSMT are Device Manager, Event Viewer, Firewall Rules, PowerShell, Processes, Registry Editor, Roles and Features, and Services. In the pipeline are Storage and Certificate Manager, according to Kriti Jindal, a program manager on Microsoft's Windows Server and Services team, in a short RSMT video.

Bringing Windows Containers to Azure

An equally important new capability Microsoft has brought to Windows Server 2016 is support for the Docker Engine, a commercial runtime environment of the open source container platform. Microsoft announced at Ignite that the Docker Engine will be included free of charge in Windows Server 2016. "This makes it incredibly easy for developers and IT administrators to leverage container-based deployments using Windows Server 2016," Guthrie said.

Among the first customers Microsoft has worked with to demonstrate how organizations might use Docker containers in Windows Server 2016 and Azure is Tyco, a provider of fire safety and security systems that are used by 3 million commercial and residential customers worldwide, with 900 locations in 50 countries and 57,000 employees (not accounting for its just-completed $3.9 billion merger with Johnson Controls Inc.).

Daryll Fogal, the CIO of Tyco, joined Guthrie on stage during the Ignite keynote to explain how his company has started using the Docker Engine in Windows Server 2016 to make apps more modular, expedite updates and then modernize legacy apps using micro-services. The proliferation of new sensors, readers, cameras and Internet of Things (IoT)-type monitoring devices is generating far more data than the existing three-tier, .NET-based security operations management platform (now used by 18,000 Tyco customers) can accommodate, according to a description Microsoft posted of its work with Tyco.

To provide a more scalable and elastic architecture, Tyco migrated the components of the existing application into VMs. However, upon learning that Docker containers would be included in Windows Server 2016, the company determined that containers would be lighter-weight and more portable than VMs. That's because each container is a self-contained package with its own runtime, including the app and all of its dependencies, libraries and configuration components, so it can run in any environment without the overhead of a monolithic app.

The application wasn't originally built with IoT or globalization in mind, according to Fogal. While containerizing its legacy apps provided additional scalability, the plan was to take the legacy applications and refactor them into Azure micro-services, he said. "And then for all practical purposes we have infinite scalability," Fogal explained.

Azure will continue to be a bridge for Tyco, he added. "We've got all these legacy people, legacy applications on on-premises servers, and we need to bridge [them] to Infrastructure as a Service and ultimately to cloud," he said. "And what we've found is that the path that Microsoft has laid out for us has made that very easy."

Tyco officials claim it took only several days to change the .NET code into Docker files, and the result is better app availability, along with consistency and control for developers, testers and deployment teams. Also, the DevOps group can push updates faster with minimal or no downtime. Most notably, the newly containerized app, which Tyco calls C-CURE 9000, can now run anywhere, including Windows Server and Azure.

Docker Engine Inside Windows Server

Microsoft and Docker first got together more than two years ago and revealed plans to create a commercial version of the Docker Engine that would work in the Azure cloud, Windows Server and Hyper-V. It was a defining decision by Microsoft because it meant casting aside its own container development efforts and joining with the open source community and some of its key rivals, including Amazon Web Services Inc. (AWS), Google Inc., IBM Corp., Red Hat Inc. and VMware Inc.

With the launch of Windows Server 2016, the two companies also announced that the commercially supported version of Docker Engine embedded in the server OS is now included at no additional cost and supported by both companies. Microsoft will be taking the frontline tier 1 and tier 2 support, which will be backed by Docker. Though it's included at no additional charge, the Docker Engine technically isn't part of the Windows Server product or the license, says Mike Schutz, general manager of Microsoft's Cloud and Enterprise division.

"We want to make it available at no additional cost to customers so it really eases their use of the container technologies," Schutz says. "Part of it was our desire and belief that customers can really benefit from the container technologies in Windows, and because Docker is a leader in containerization and they have this Docker Engine capability that was available, in order to help accelerate customers getting the value of the containers in Windows Server, we thought this would be a great partnership."

Tyco's early work with Docker was a key impetus for deciding to extend the integration of Docker Engine with Windows Server 2016, Schutz explains. "They're a proof point and in many ways they were an inspiration for us to create this agreement with Docker because we saw how well they were able to become more agile within their development organization by using Windows Server and Docker together," Schutz says. "Now we're trying to replicate that through the breadth of our customer base."